Data quality: four strategies to ensure data quality

Why data quality is a struggle, and the journey to data quality.

The world is full of the buzz around big data. It is hard to miss it and miss the huge promises being made around the potential. Fact based decision making is at the heart of professional management. Hundreds of thousands if not millions of analysts are crunching numbers to impress, gain insight and find those elusive gold nuggets. Unfortunately, the wind is often taken out of their sails through the trials and tribulations of trying to get the baseline and core numbers right. Many good data initiatives break down, or are never started because people are daunted by the difficulty of getting the base data in place.

Why do organisations struggle to ensure data quality?

Data is regarded as something which “has to be done”

There has been so much hype about data that all organizations know there is value in collecting it. However, there is no point simply collecting data unless it is going to prove its value and help an organization’s long term strategic goals. Too often, organizations collect data for the sake of it.

For many people who are asked to enter the data it is a thankless task. Those entering the data get too little value, personally, from getting it right. There is no incentive to do the job well.

Data is often not uniform and is stored in different places

Often this is simply due to upfront laziness. However, “garbage in means garbage out”. For example, on one project a client had a database of over 30,000 items of IT hardware spend. The vast majority of the items were duplicates and the data could be reduced a couple of hundred items that accounted for well over 95% of the spend. The company had made its life much harder by not having clear rules about how data was to be collected and stored.

People look at the data in the wrong way

“Table blindness”: Excel is great for many things but getting real insight from data is time consuming . It is difficult to see when and where the data points are wrong. A picture tells a thousand words. A table of data groans with noise. It is hard to see patterns; to remember; to see outliers; to bother seeing it all.

Poor training and technical skills. How many people really get trained in how to work with data? Yes, lots get trained in how to write programs or even how to build an excel model. However, few really get taught how to think about data and why they might get something out of it.

Cleaning data is time consuming

Even if you know what is wrong with a dataset it is physically hard work to clean. It is made even harder by the fact that those cleaning it are often not in a position to know what the correct data would look like. Therefore the process of the analyst finding incorrect data, finding the relevant data owner, and then updating the data is extremely time consuming.

The journey to data quality

In light of this I argue there are four simple strategies to ensure your data is clean and stays clean. They must be used together as none of them work or are sufficient on their own.

1. Make the process of entering and cleaning data useful and valuable for all levels of the organisation

Top levels: Make sure you can answer the key questions; what is the business reason for having the dataset? What question or decision do you intend to answer or make from it? If you have wrong or incomplete data, what will the consequence be? The bottom line is the answers should be pretty compelling otherwise it will be a waste of time.

Lower levels: For those performing the day to day task of entering and cleaning data you have to answer the data owner’s questions; “If I’m going to input data, what is in it for me?”. There are two methods here; firstly ensure the data owner can see the value they are contributing/getting returned. Secondly make it a game. For example on one project I worked with a network of hospitals wanting to clean their data. In order to incentivize each one we put up a map of how each one was progressing and who was top week by week. The competition created made the process fun and part of a team effort.

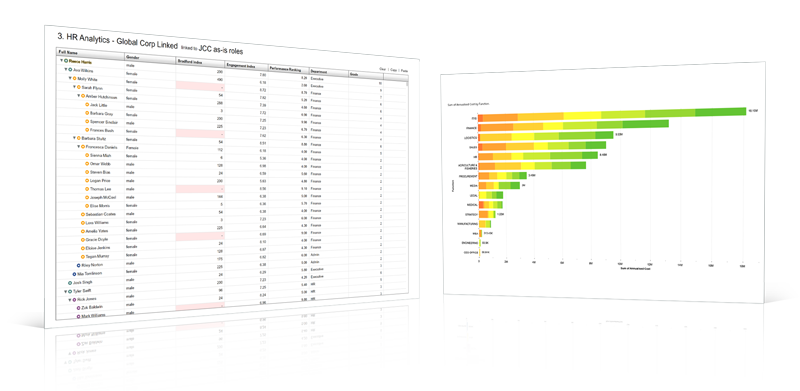

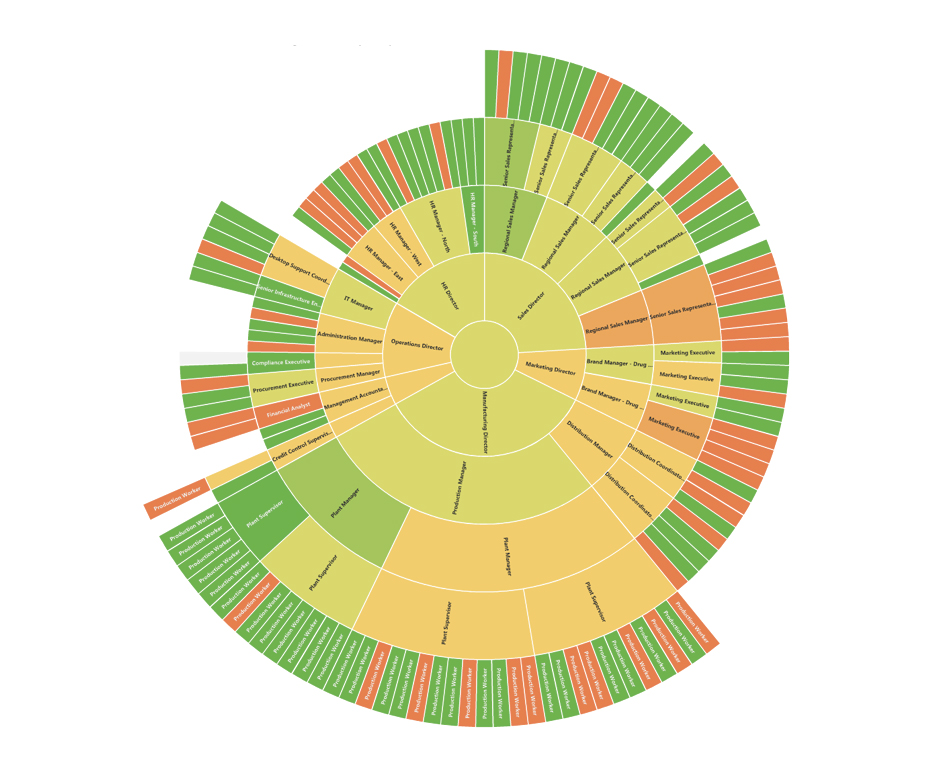

2. Visualise

Make it easy to see the data from all angles. This not only allows you to slice the data and get insight, but makes it easier to see data errors. Either way you move forward.

3. Crowd source

Often those who are in the best position to fix data are the ones who want the maximum insight from it but do not have the time to correct it. Therefore make it easy for them to be able to change data. Firstly, make the process mobile. Often the best time to do these things is when you are in transit or a little bored. If the data is available at all times and all devices such as smartphone and tablets it makes it easy to review charts and add insight. Secondly make data easy to change. If you need information from someone keep the amount of work required broken into 2-3 minute chunks of time. If longer, ensure you show progress; have a strong reason why and track progress. For example, sending out a webform takes two minutes to complete and gives you instant updates on your data.

4. Create rules and clean the meta data centrally

Often multiple people are collecting and cleaning data and they may be working in different offices or even different countries. You will therefore need clear policies on how data is collected. For example, often the lowest level of data is ok, but it is how the data is categorized or tagged. A common and easy one to grasp is Gender. The “Male” gender is often spelt Male; male; m; 0 or 1; MALE…and that is in English alone.

The journey to data quality does not have to feel like you are pulling teeth!

There is a perception that data as a process is incredibly painful. However, it doesn’t have to be. It can be far more value adding and engaging. Make it easy. Give scores. Make it fun. Make it a game. Drive insight from the process. Create mechanisms for keeping it clean and make those mechanisms easy. If you follow the four rules, I promise you will never look back.