Data quality: crossing the data chasm

How to introduce and sustain a global HR system in practice, and secure the long-term benefits that come from data quality.

Four centuries ago, the East India Company became one of the first multinational corporations. Since then, organizations have tended to become increasingly fluid and spread across international borders, helped by advances in technology: faster communication and easier travel. These changes are remarkable. It’s hard to believe that when my ancestors made the journey from Scotland to New Zealand in 1840, it took them almost four months, and the voyage saw three deaths and six births. Now the greatest effort involved in travelling to the other side of the world is baggage reclaim!

New forms of communication such as email and Skype have also bridged the geographical gap, but the result is a data chasm. Instant communication does not guarantee alignment between offices, and HR is now playing catch-up to collect meaningful, up-to-date data. Simple questions such as “How many FTEs do I have?”, “Where are my FTEs based?” and “What is the total payroll across my organization?” are proving very hard to answer. All organizations are trying to address this situation, but it is an area in which many, if not all, are struggling.

This blog focuses on how to introduce and sustain a global HR system in practice, and so secure the long-term benefits that come from data quality.

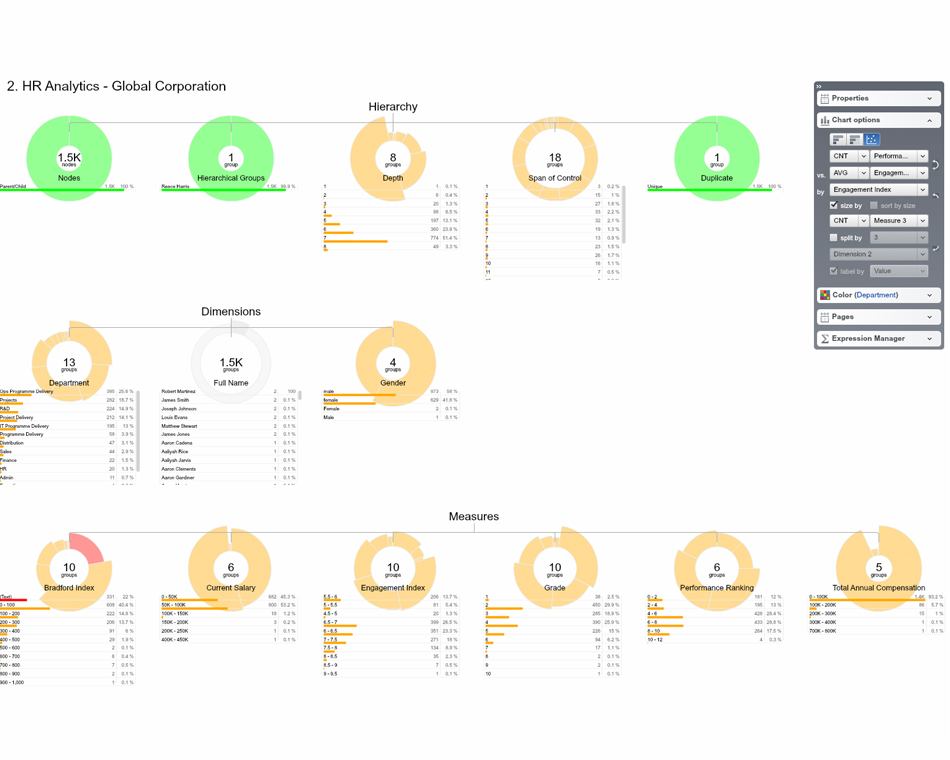

First, alongside the ability to collect, integrate and analyze data, the HR system and process must deliver three things.

1. Auditability

Collecting data from different offices and sources around the world means a large number of data changes on a regular basis. You therefore need an HR system which tells you when data was changed, who changed it, and what changed and how. This is not only useful but, for many companies, a legal necessity under corporate and accounting regulations such as the Sarbanes-Oxley Act (SOX), a federal law in the United States.
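To make the "when, who, what and how" concrete, here is a minimal sketch of an audit trail in Python. It is an illustration only, not how any particular HR system works: the `AuditedStore` and `AuditEntry` names, the in-memory storage and the field names are all assumptions made for this example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any


@dataclass(frozen=True)
class AuditEntry:
    """One recorded change: when, who, what, and how it changed."""
    changed_at: datetime
    changed_by: str
    record_id: str
    field_name: str
    old_value: Any
    new_value: Any


@dataclass
class AuditedStore:
    """Hypothetical in-memory store that logs every field change."""
    records: dict = field(default_factory=dict)
    audit_log: list = field(default_factory=list)

    def update(self, record_id: str, field_name: str, new_value: Any, user: str) -> None:
        record = self.records.setdefault(record_id, {})
        old_value = record.get(field_name)
        if old_value == new_value:
            return  # nothing changed, so nothing to log
        record[field_name] = new_value
        self.audit_log.append(
            AuditEntry(datetime.now(timezone.utc), user, record_id,
                       field_name, old_value, new_value)
        )

    def history(self, record_id: str) -> list:
        """All logged changes for one record, oldest first."""
        return [e for e in self.audit_log if e.record_id == record_id]
```

Calling `store.update("emp-1", "office", "Glasgow", user="bob")` changes the record and appends an entry holding the timestamp, the user, and the old and new values, so `store.history("emp-1")` can answer an auditor's questions later.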

2. Governance

If you have a global system, it must be governable. You don’t want just anybody making changes; otherwise the system is out of control, and risk, operations and finance will be having kittens! There has to be a framework of rules around who can make alterations, alongside a system of checks and balances.
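One way to picture such a framework is a role-to-permission table plus a rule that nobody approves their own change. The sketch below is an assumption-laden illustration: the role names, the `Action` values and the `change_is_valid` helper are hypothetical, and real rules would come from your own policy.

```python
from enum import Enum


class Action(Enum):
    VIEW = "view"
    EDIT = "edit"
    APPROVE = "approve"


# Hypothetical role-to-permission mapping; real roles would come from policy.
ROLE_PERMISSIONS = {
    "employee": {Action.VIEW},
    "data_owner": {Action.VIEW, Action.EDIT},
    "hr_admin": {Action.VIEW, Action.EDIT, Action.APPROVE},
}


def is_allowed(role: str, action: Action) -> bool:
    """True if the role grants the action; unknown roles get nothing."""
    return action in ROLE_PERMISSIONS.get(role, set())


def change_is_valid(editor: str, editor_role: str,
                    approver: str, approver_role: str) -> bool:
    """A change stands only if an authorised editor made it and a
    different, authorised person approved it (a simple check and balance)."""
    return (
        is_allowed(editor_role, Action.EDIT)
        and is_allowed(approver_role, Action.APPROVE)
        and editor != approver
    )
```

Keeping the permissions in one table means the rules can be reviewed and changed in one place rather than being scattered through the system.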

3. Context

Linked to the issue of governance, the system must be able to collect data as part of an overall structure, not as standalone sets of information. Knowing where data sits not only shapes governance (who can access or change the information) but also informs the decisions you are making and the insight you are looking to gain.

However, even the best HR system in the world is of little use if it is not implemented and used in the right ways. Therefore, using the four strategies from last week’s blog, here are four steps to implementing a successful data quality process.

Conversation is key

This is the “What’s in it for me?” question. No two manager-employee relationships are the same, let alone across whole countries; everyone’s drivers and motivations are different. One of the major challenges facing organizations is the tension between the need for global standards and the need to allow for and respect autonomy and culture in local offices. How do you get the balance right? Conversation is needed at all levels of the organization to find out why the data is important from a top-level perspective and how it can be made relevant for each individual and office involved in the process. To create change, you have to make sure people benefit from it.

For example, on a recent project a leading electronics distributor centralized fragmented data from 6,000 employees across 29 countries. One office in South America stored all its payroll information in a leather-bound ledger. It worked for them because they were a small office and could track all their information very easily. However, this practice was obviously not very useful for global HR. Once the value of having data on a global scale, and the time they could save in the long run, had been explained to them, they began to see the upsides. They not only started uploading data regularly but also transferred all their existing data from the ledger into the system.

Create rules

Having gained support for the project, create rules which span the organization as a whole. Here are several questions to think about, among others:

- Who has the ability to collect, input and alter data?

- How is data being collected?

- How often should data be uploaded?

For all of these rules there is a trade-off between flexibility and structure. The two are not mutually exclusive, but they do need thinking about. In the project with the electronics distributor, they struck a balance. They assigned one data owner for each office, who uploaded data to the global system every week. The system showed when a data owner had made an update, so that those falling behind could be chased up. These rules were quite strict because head office needed regular updates. However, because data was integrated automatically, localized inputs such as currency could be translated into normalized global standards, keeping changes to local data collection to a minimum.
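The two mechanisms in that example, normalizing local currency and spotting data owners who have fallen behind, can be sketched in a few lines of Python. This is a sketch under stated assumptions rather than the project’s actual implementation: the function names are hypothetical, the weekly threshold is taken from the rule above, and the exchange rates are made-up placeholders, not real market data.

```python
from datetime import date, timedelta
from decimal import Decimal

# Illustrative placeholder rates to a reporting currency (USD); not real data.
RATES_TO_USD = {
    "USD": Decimal("1"),
    "BRL": Decimal("0.20"),
    "GBP": Decimal("1.25"),
}


def to_usd(amount: Decimal, currency: str) -> Decimal:
    """Convert a local amount to the reporting currency, rounded to cents."""
    return (amount * RATES_TO_USD[currency]).quantize(Decimal("0.01"))


def overdue_offices(last_upload: dict, today: date, max_age_days: int = 7) -> list:
    """Offices whose most recent upload is older than the allowed age."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted(office for office, uploaded in last_upload.items()
                  if uploaded < cutoff)
```

With the placeholder rate above, `to_usd(Decimal("1000"), "BRL")` gives `Decimal("200.00")`. The local office keeps entering figures in its own currency, and the conversion happens centrally.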

Crowd Source

Making data collection and collation easy is vital to ensuring sustainable data quality. Using webforms and short surveys allows dataset owners to collect specific information from data owners. Merges that collate data automatically, on a local level between the dataset and the webforms and on a global level between the centralized dataset and the local datasets, mean the benefits of crowd sourcing are maximized with minimal effort.
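As a rough illustration of the two levels of merge, the sketch below folds webform responses into a local dataset and then collates local datasets into a global one. Everything here is an assumption for the sake of the example: the function names are hypothetical, datasets are plain dictionaries keyed by employee ID, and employee IDs are assumed to be unique across offices.

```python
def merge_records(base: dict, updates: dict) -> dict:
    """Return a new dataset in which fields from `updates` override those
    in `base`, keyed by employee ID. The inputs are left unmodified."""
    merged = {emp_id: dict(fields) for emp_id, fields in base.items()}
    for emp_id, fields in updates.items():
        merged.setdefault(emp_id, {}).update(fields)
    return merged


def collate_global(local_datasets: dict) -> dict:
    """Combine per-office datasets into one, tagging each record with its
    office. Assumes employee IDs are unique across offices."""
    combined = {}
    for office, dataset in local_datasets.items():
        for emp_id, fields in dataset.items():
            combined[emp_id] = {**fields, "office": office}
    return combined


# Local level: webform answers update the office's own dataset.
local = {"e1": {"name": "Ana", "fte": 1.0}}
webform = {"e1": {"fte": 0.8}, "e2": {"name": "Luis", "fte": 1.0}}
local_merged = merge_records(local, webform)

# Global level: each office's dataset is collated centrally.
global_view = collate_global({"Lima": local_merged})
```

Because both steps are plain functions, they can run automatically whenever a form is submitted or an office uploads, which is what keeps the effort for local teams low.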

Visualize

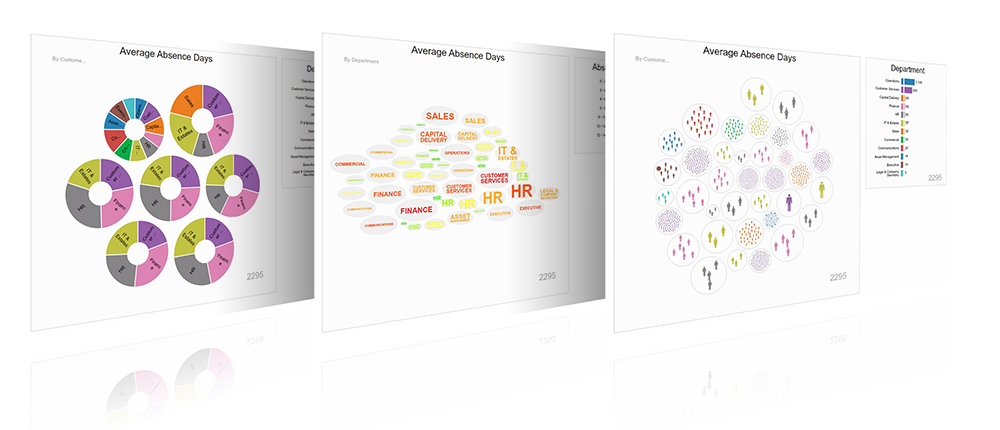

With data coming in from so many sources, and in such volume, the only way to make sense of it is to visualize it. This allows HR to drill down into specific countries or offices and gain relevant insight into the workforce. On the electronics distributor project it also allowed for ongoing data cleansing, as outliers were easy to pick up in image form. Visualizing data is also key to moving towards data-driven decision making.
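The outliers that jump out of a chart can also be flagged numerically before anyone looks at the picture. The sketch below uses the common interquartile range (IQR) rule as a stand-in; it is not the method used on the project, and the `flag_outliers` name, the 1.5 multiplier and the sample figures are assumptions for illustration.

```python
import statistics


def flag_outliers(values: dict, k: float = 1.5) -> list:
    """Return the keys whose value lies more than k * IQR outside the
    first or third quartile. Needs at least two data points."""
    q1, _, q3 = statistics.quantiles(values.values(), n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return sorted(key for key, v in values.items() if v < low or v > high)


# Hypothetical weekly hours per employee; "e7" looks like a typing error.
weekly_hours = {"e1": 40, "e2": 42, "e3": 41, "e4": 39,
                "e5": 43, "e6": 40, "e7": 400}
suspects = flag_outliers(weekly_hours)
```

Here `suspects` contains only `"e7"`, the kind of entry a data owner would then be asked to check and correct.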